AI Use Survey Results: Why AI Usage Among Internal Auditors Still Lags, and What Auditors Can Do About It

As the Internal Audit Collective’s many articles on AI topics and first eBook of Gen AI prompts show, enabling AI use is a huge focus for us. So we conducted a survey asking members about their current use and future goals. What we found was surprising.

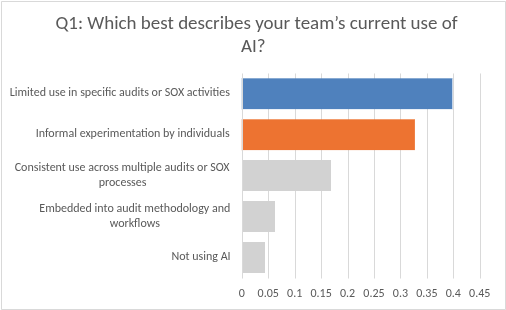

Of the 113 members who took the survey, less than 25% are using AI extensively. Approximately three in four are using AI in less than 25% of their audit projects.

Here’s the thing. Auditors who join the collective are typically interested in leveling up their teams, technologies, and methodologies. They’re often ahead of the curve in adopting new technologies.

So — if these results reflect current AI practices among high-performing teams — what’s going on? What’s happening in the rest of the profession? And what has to happen to meaningfully increase Internal Auditors’ AI adoption?

We sat down with Internal Audit leaders and Internal Audit Collective AI Advisory Working Group leaders Naaznine Chandiwala, Ashes Basnet, and Alan Maran to get some perspective. These leaders are on the front lines of the collective’s — and the industry’s — efforts to better understand how we can use and advise on AI in a responsible, sustainable way. Their responses reflect their general observations of the industry, except where specified.

Why Is AI Adoption Still So Low?

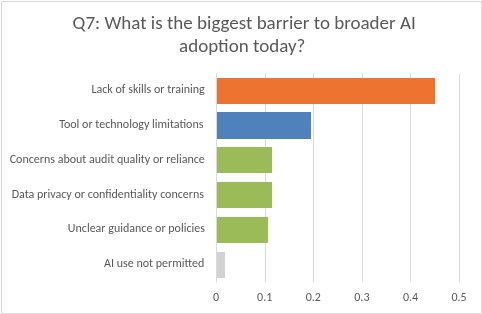

In many organizations, multiple barriers stand in the way of AI adoption. According to our survey, lack of skills or training is the #1 barrier keeping audit teams from bringing more AI into their work. Encouragingly, only 2 of 113 respondents reported that AI use is prohibited.

What does the big picture look like for these organizations? How do these various hurdles manifest, and what can Internal Audit teams do to overcome them?

Naaznine, Ashes, and Alan all pointed to the same baseline answer: Most organizations still lack key foundational elements required for secure, scalable, structured, and programmatic AI adoption.

Below are the primary challenges they identified.

Governance and Technology In Transition

“I believe that certain key foundational elements required for anyone to use AI are still maturing. There are a lot of AI tools available, but enterprise-approved AI software that ensures data confidentiality, security, and other safeguards is still lacking in many companies. Not everybody has those tools available,” said Naaznine. “Companies have questions about governance. How secure is my sensitive data? What if a breach happens? In the maturing stage, companies are going to think in these parameters.”

Change Management Needs

Similarly, as Naaznine explained, “AI adoption involves both workforce and cultural considerations. Leaders want to be careful about how rules are evolving, and how talent will be impacted. They want to innovate, but they want to do it responsibly. These considerations are being taken very seriously by organizations. As a result, their AI capabilities are evolving at a slower pace.”

This root cause really hit home for me. During a recent Internal Audit Collective AI Prompt Practice session, session leader Joe Earl (also a leader in the AI Advisory Working Group) boiled it down in similar terms. He said, “This is organizational change management, right? That’s really what it takes, and where the big disconnect is. When you read the reports and studies, everybody did the easy thing: They got folks licenses and said, ‘Hey, go save us a half-billion dollars, right?’ But no one had the programs, and [AI implementation] takes a lot of time, energy, and effort. You need to have good data management and processes to be able to apply AI effectively. Not every organization is there.”

Lack of Bandwidth

“The gap isn’t the ambition. It’s the capacity,” said Ashes. “Practically, adoption is low because teams simply don’t have the bandwidth. AI hit us all like a ball from left field, and most auditors haven’t had the space to experiment or repurpose capacity.”

Alan stressed the importance of helping the team carve out adequate time and headspace for experimentation. “Maybe it means cutting a project here and there to help the team feel more comfortable in having time to experiment,” said Alan. Describing the usual rush from project to project, he added, “Normally we say, ‘I don’t have time for that.’ That’s what I heard a lot from my team. So we said, alright, let’s recalibrate now and then, giving you the time you need to experiment.” Making that room also means resetting expectations with management and the Audit Committee, helping them understand that meaningful progress in AI implementation likely requires strategic changes to the audit plan.

Dual Nature of the Challenge

Sure, Gen AI has a relatively forgiving learning curve. But as Ashes pointed out, Internal Audit also faces a much steeper learning curve: “We’re being asked not only to use AI in our day-to-day work, but also to understand and eventually audit AI risk, whether it be privacy, security, ethics, bias, model risk, all those things. Those are very complex topics where auditors have low confidence. These are not concepts most auditors have dealt with or been trained on. The training lags because teams are bandwidth-constrained and the learning curve is steep.”

Our organizations will need our help auditing and advising on AI. Make a strong case for AI, ensuring that management and the Audit Committee know what’s at stake.

Lack of Structured AI Training

Naaznine reinforced the criticality of foundational governance and tools for enabling structured training within each organization. “The pace varies from organization to organization and industry to industry. It depends on the company’s inherent risk-taking ability. That will define how fast people can really structure AI training. Most people are getting experience with AI. But the structured training piece will not happen until companies have governance and enterprise-approved AI tools in place,” explained Naaznine.

As Ashes put it, “For Internal Audit, adoption isn’t just about learning to use AI. It’s equally about understanding how to audit the risks created by those tools. And what I’ve seen is most current training programs don’t cover both sides of that equation. We’re all figuring it out as we go.” Unsurprisingly, many teams experience cognitive overload as they try to decide where to focus and how to build formal structures and training that cover both aspects. Accordingly, observed Ashes, “Adoption tends to be more individual, not programmatic. But without a formal structure, it never translates into team-level maturity.”

Looking for a starter plan to help you uplevel your team? Check out Joe Earl’s proven 5-step approach.

Intolerance for Imperfection

As Alan called out, most Internal Audit teams’ cultures aren’t built with the “tolerance for imperfection” integral to AI experimentation. He explained, “The profession’s traditional intolerance for imperfection is a strength in many ways. But it also creates friction when you try to adopt technology that is more probabilistic. So you end up with teams that are curious and motivated, but stuck in an experimentation plateau. They prove that AI can work, but they haven’t crossed the bridge to embedding AI into how they deliver audits day to day.”

To overcome this hurdle, Alan told his team, “Don’t be ashamed of failing. It’s fine if you take two steps forward and one step back, because you then take another step forward.” He set the tone and expectation that even failures constitute positive progress in AI experimentation, because they help provide clarity on what doesn’t work.

In other words, get started already. Experiment with proven prompts other teams use.

Why Are Targeted Use Cases and SOX the Most Common Focus?

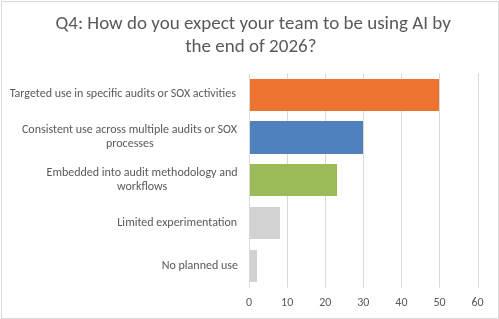

The survey also found that most teams expect to use AI in 2026 on targeted use cases in specific audits or SOX activities.

This answer isn’t exactly surprising. But it’s worth taking this question a level deeper, to help teams just getting started with AI better understand where and how they can begin.

So, why is this the right first choice for most teams? And what have they learned in their pilot projects?

Controlled Environments Reduce Risk

“Targeted use cases allow you to pilot the AI technology in a controlled environment,” said Naaznine. “SOX audits are an ideal starting point. The processes and testing procedures are standardized; the data sets are predictable. The more complexity you involve, the more risk the company is taking on — especially when data is involved.”

Of course, it also remains unclear whether (and to what degree) External Auditors will be comfortable relying on AI-assisted testing.

Quick Wins Build Confidence and Prove ROI

Ashes also sees SOX’s controlled, repeatable processes as “low-hanging fruit” for AI pilots that can drive quick wins and build teams’ confidence. “I think that’s where the focus should be,” said Ashes. “How you build structure is to demonstrate value and show what success looks like. Give the team a reason to believe in the tools and the value. It generates that momentum, and tends to pull adoption forward more effectively than trying to scale everything at once.”

You Can Learn by Doing — and Learn From Mistakes

“If you pick two or three real use cases (like journal entry testing, control narrative drafting, risk assessment, and summarization) and have the team actually use AI with coaching guardrails, that helps a lot,” said Alan. Once you have a controlled environment where an AI system is working, you can make that your default and move forward accordingly. This approach also helped Alan’s team identify AI champions who are further along in their learning. These champions, alongside other AI, analytics, and programming experts tapped from the wider organization, have been integral in supporting overall team development.

Keep in mind, however, that learning to use AI is inevitably an iterative, trial-and-error process. “Creating a feedback loop has been extremely important for us. We learn from each other. We learn from our mistakes. We take note of those mistakes. We know the things we’ve done and the routes we took,” said Alan. “Have your team share what’s working and what’s not on every engagement. So the whole function learns together.”

What Does It Mean to Use AI More Consistently?

Only 17% of respondents report that their current AI usage is “consistent use across multiple audits or SOX processes.” Approximately 27% of respondents expect such usage by the end of 2026.

As I thought about these results, however, I realized: “Consistent use” can mean different things to different people. Ashes, Naaznine, and Alan shared their perspectives.

AI Treated as a Department-Wide Imperative

“We have to create structure and expectations around how AI will be used in the department. This really depends on the leadership mindset,” said Ashes. If the leader’s position is that AI is an imperative rather than a “nice to have,” training gets prioritized.

Innovation won’t happen unless you make it a priority.

Standardization and Alignment That Pave the Way for Scalable Use

“At this point in time, I don’t think it means using AI in every audit step. In simple words, consistency for me is standardization. AI is not optional or ad hoc usage, but a standard, structured component of audit,” said Naaznine. “To me, consistency means you are able to formally embed AI in methodologies within a secure governance environment. For example, if you use AI in SOX testing procedures, it’s reflected in your documentation: as in, use AI in this way for this step. It’s aligned with your quality assurance processes, especially IIA Standards. That makes it consistent usage, which can then be expanded into a bigger use case.”

AI Embedded at Every Stage

“Consistent use means AI isn’t just a special project or add-on — it’s just part of how we should work,” agreed Alan. “In practice, that looks like AI embedded into our audit methodology at every phase. For example, during planning, we should be using AI to analyze the risk universe, scan for prior findings, and draft preliminary scopes. In fieldwork, we should use AI to analyze full populations of data instead of samples, flag anomalies, and summarize large document sets. During reporting, we should be looking at draft observations and bringing in benchmark findings from prior audit reports.” He added, “The human in the loop is extremely important. But AI should be amplifying what one person or one team can accomplish… AI changes the questions you can ask throughout the audit program.”

Alan shared a specific success story from using AI in reporting: His team used ChatGPT to individually tailor reports to the needs, interests, and vocabularies of different stakeholders (e.g., CTO, CFO, CEO, CLO). “It did an amazing job of actually bringing it a step down and saying, ‘This is what matters to anybody in this company. This is why I’m writing to you right now’,” explained Alan.

Balanced Focus

“At the end of the day, consistent AI use is as much about audit enablement as about audit execution,” said Ashes. While current AI usage often focuses on research, brainstorming, summarizing, and drafting, “Applying those same capabilities across all audit projects is what consistency really looks like.”

He also reiterated the need for a holistic approach. “Consistent use comes from building standards, reusable prompts, templates, team expectations on when and how AI should be used to make your day-to-day work easier — and also building the capability to understand and audit the AI risks your organization is introducing,” said Ashes. In this way, audit enablement becomes the starting point for identifying and understanding AI tools and risks, ultimately enabling AI audit execution.

THE LAST WORD: Take Action to Ensure Your Future Relevance

Internal Audit is changing. I firmly believe that Internal Audit’s real AI end game comes down to relevance.

The real question isn’t whether AI can help us work faster.

It’s whether we’re fluent enough to advise on it and make credible recommendations around how it can be used to improve processes, controls, and risk management.

What will you do in 2026 to help your team build the AI fluency critical for ongoing relevance?

Whatever your plan, you can trust that the Internal Audit Collective will keep working on AI prompts, guidance, and other resources to help you move forward. That includes:

- Gen AI “Prompt Practice” Office Hours, where a member demonstrates a prompt and helps you use and troubleshoot the prompt live

- Member-only AI Prompt Library & Template Repository

- WIP Gen AI Playbooks focused on AI prompts for SOX and AI audit & advisory projects

- Under development:
  - AI workflow templates
  - AI tool-of-the-month showcase
  - AI agent showcase and demo days
  - AI system and next-gen audit playbooks
  - Cross-industry AI audit roundtables
  - Cross-functional AI governance roundtables (e.g., Legal, IT, ERM, Internal Audit)
  - Quarterly executive AI briefs (executive/AC reporting trends and topics)

But we need your help to make it all happen. Which event or enabler is most exciting to you? Get involved today. And if you haven’t already joined the Collective? You know what to do.

When you are ready, here are three more ways I can help you.

1. The Enabling Positive Change Weekly Newsletter: I share practical guidance to uplevel the practice of Internal Audit and SOX Compliance.

2. The SOX Accelerator Program: A 16-week, expert-led CPE learning program on how to build or manage a modern & contemporary SOX program.

3. The Internal Audit Collective Community: An online, managed community to gain perspectives, share templates, expand your network, and keep a pulse on what’s happening in Internal Audit and SOX compliance.